Barbara, the Kubernetes of the Edge

As the microservice trend grows, the use of containers is spreading. Deploying containers at scale requires orchestrating the entire process, and this is where Kubernetes comes in: a popular tool among DevOps teams and, in recent years, a reference point in the cloud world.

Until now, companies have built their applications one on top of the other as monolithic systems, extending them as new business needs arose and deploying them on-premise or, in recent years, in the Cloud. The classic definition of a monolithic application is a set of tightly coupled components that have to be developed, deployed and managed as a single entity. Such an application is practically boxed into a single process, which makes it very difficult to scale: the only practical way is vertically, by adding more CPU or memory.

In contrast, the concept of microservices has recently emerged, which allows the encapsulation in containers of several small applications that can communicate with each other to provide joint functionality.

Nowadays, everything seems to be moving towards dockerisation, the popular term for packaging a software application so that it can be distributed and executed in containers.

With this new system, it is not necessary to modify everything at once, as we can upgrade each of these containers separately and replace them 'hot' in the production environment, with the advantages that this brings when it comes to scaling.

As the number of microservices in our system grows, their management becomes more complicated: we need a coordination system for the deployment, monitoring, replacement, automatic scaling and, ultimately, administration of the different services that make up our distributed architecture.

To solve this challenge, Google introduced Kubernetes in 2014, and it has since become the de facto standard for deploying and running distributed applications.

What is Kubernetes and how does it work?

Kubernetes is open source software for deploying and managing containers at scale. It facilitates automation and declarative configuration, and it decides on which node each container will run, based on resource requirements and other constraints. In addition, we can mix critical and best-effort workloads to increase utilisation and save resources, and we can automate rollouts and rollbacks.
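To make the idea of placing containers according to resource requirements more concrete, here is a minimal sketch in Python. It is not how the Kubernetes scheduler is implemented; the names `Node`, `ContainerRequest`, `pick_node` and `schedule` are invented for illustration, and the scoring policy (prefer the node with the most free CPU) is a deliberately simple assumption.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    # Hypothetical view of a node: only its remaining capacity.
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class ContainerRequest:
    # Hypothetical resource request declared by a container.
    name: str
    cpu_millicores: int
    memory_mib: int


def pick_node(request: ContainerRequest, nodes: List[Node]) -> Optional[Node]:
    """Return a node that can host the request, or None if nothing fits."""
    # Filter step: keep only nodes with enough free CPU and memory.
    feasible = [
        n for n in nodes
        if n.free_cpu_millicores >= request.cpu_millicores
        and n.free_memory_mib >= request.memory_mib
    ]
    if not feasible:
        return None
    # Score step: a toy policy that prefers the node with the most spare CPU.
    return max(feasible, key=lambda n: n.free_cpu_millicores)


def schedule(request: ContainerRequest, nodes: List[Node]) -> Optional[str]:
    """Place the request and reserve its resources on the chosen node."""
    node = pick_node(request, nodes)
    if node is None:
        return None  # the container stays pending
    node.free_cpu_millicores -= request.cpu_millicores
    node.free_memory_mib -= request.memory_mib
    return node.name


if __name__ == "__main__":
    cluster = [Node("node-a", 500, 1024), Node("node-b", 2000, 512)]
    print(schedule(ContainerRequest("api", 400, 800), cluster))
    print(schedule(ContainerRequest("worker", 400, 256), cluster))
```

The two-step shape (filter out nodes that cannot fit the container, then rank the rest) is the part worth remembering; a real scheduler weighs many more constraints.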

Kubernetes builds on more than a decade of Google's experience running production applications at scale, combined with the best ideas and practices from the community.

Kubernetes orchestrates compute, networking and storage infrastructure on behalf of user workloads, so that applications do not have to manage it themselves. This offers the simplicity of Platform-as-a-Service (PaaS) with the flexibility of Infrastructure-as-a-Service (IaaS) and enables portability between infrastructure providers.

How Kubernetes works

As applications grow to span multiple containers deployed on multiple servers, managing them also becomes increasingly complex. To control this complexity, Kubernetes provides an open source API that controls how and where those containers run.

Kubernetes organises machines, virtual or physical, into clusters and schedules containers to run on those machines based on the processing resources available and the resource requirements of each container. Containers are grouped into pods (the basic operating unit of Kubernetes), and the number of pods can be scaled up or down to match the desired state.
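The notion of a "desired state" can be illustrated with a small reconciliation loop. The sketch below is a simplified model written for this article, not Kubernetes code: pods are represented as a dictionary from a name to a health flag, and the function names are hypothetical.

```python
from typing import Dict, List


def _new_name(pods: Dict[str, bool]) -> str:
    """Return the first unused name of the form pod-N (illustrative only)."""
    i = 0
    while f"pod-{i}" in pods:
        i += 1
    return f"pod-{i}"


def reconcile(desired: int, pods: Dict[str, bool]) -> List[str]:
    """Move `pods` (name -> healthy?) towards `desired` healthy replicas.

    Mutates `pods` in place and returns the actions taken.
    """
    actions: List[str] = []
    # 1. Remove pods that failed their health check.
    for name in [n for n, healthy in pods.items() if not healthy]:
        del pods[name]
        actions.append(f"delete {name}")
    # 2. Create pods until the desired count is reached.
    while len(pods) < desired:
        name = _new_name(pods)
        pods[name] = True
        actions.append(f"create {name}")
    # 3. Remove surplus pods if the desired count was lowered.
    while len(pods) > desired:
        name = sorted(pods)[-1]
        del pods[name]
        actions.append(f"delete {name}")
    return actions


if __name__ == "__main__":
    state = {"pod-0": True, "pod-1": False}
    print(reconcile(3, state))  # replaces the unhealthy pod and adds one more
    print(reconcile(3, state))  # nothing left to do
```

Calling the function repeatedly with the same desired count is harmless: once the observed state matches, it takes no further action, which is the property that makes declarative configuration practical.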

Kubernetes also manages service discovery automatically, incorporates load balancing, tracks resource allocation and scales workloads based on resource usage. In addition, it checks the health of individual resources and allows applications to recover automatically by restarting or replicating containers.
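As an example of usage-based scaling, the Kubernetes Horizontal Pod Autoscaler documents its core rule as the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The sketch below applies that rule in Python; the function name and the `min_replicas` and `max_replicas` defaults are assumptions made for illustration, and the real autoscaler adds tolerances and stabilisation windows that are omitted here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Replica count suggested by the ratio of observed to target usage."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    # Core rule: ceil(current_replicas * current_metric / target_metric).
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 replicas at 90% average CPU against a 60% target: scale out.
    print(desired_replicas(4, 90, 60))
    # 4 replicas at 30% average CPU against a 60% target: scale in.
    print(desired_replicas(4, 30, 60))
```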

Edge Computing, Artificial Intelligence and applications

Edge computing is a computing model that consists of processing data at the edge of the network, that is, at nodes much closer to where the data is captured.

This model, coupled with Artificial Intelligence, is expanding rapidly in industrial sectors and is already considered one of the technological trends with the most momentum in 2022 as part of industrial digitalisation strategies: Artificial Intelligence at the Edge (known as Edge AI). This technology focuses on deploying algorithms close to where the data they use originates. For this purpose, edge nodes are installed locally and connected directly to the data sources.

Sectors such as electricity distribution and the water industry are in the midst of digitally transforming a large part of their business, and Edge AI is an enabler of that transformation. It is in these environments that the use of containers has become increasingly widespread for developing and deploying data exploitation algorithms.

However, working with containers in distributed and often remote environments, as proposed by Edge Computing or Edge AI models, requires a tool that allows control of the entire lifecycle of the edge nodes and the intelligence running on them.

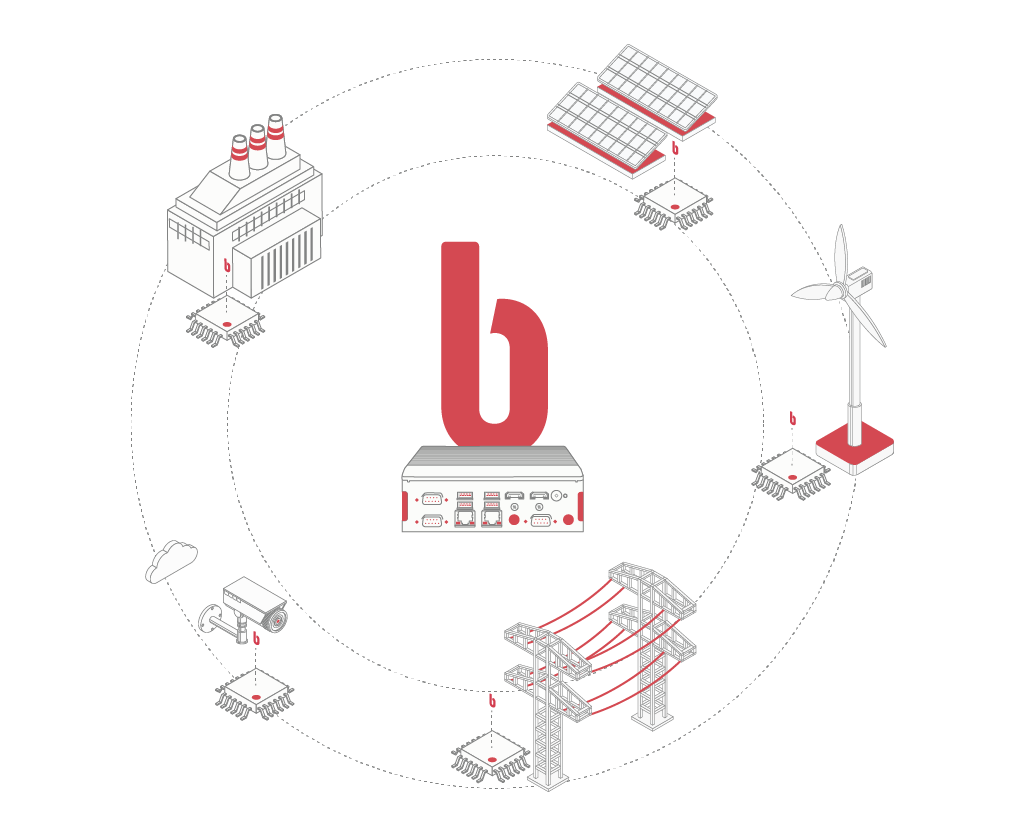

Barbara as an application orchestration platform on the Edge

As containerised applications and computing extend to the edge, the same need to manage containers at scale that Kubernetes addresses in the cloud arises there too. Lightweight Kubernetes distributions are now required for edge computing, as more and more organisations push workloads closer to the data-generating applications and devices.

Barbara, the cyber-secure industrial edge platform, enables organisations to run containers on the edge to maximise resources and facilitate testing, as well as providing the ability for DevOps teams to move faster and more efficiently.

With a strong focus on what organisations need to know from a platform, network and infrastructure perspective, Barbara is positioned as a leader in application orchestration at the edge.

Barbara Edge is able to orchestrate containers across multiple hosts, make better use of hardware to maximise the resources needed to run business applications, and control and automate application deployments and upgrades.

How to work with Barbara in distributed environments such as the Edge

To speed up the work of deploying and running applications on the Edge on a large scale, it is essential to have a tool such as Barbara that allows at least the following actions to be performed securely:

- Deploy all required containers on one or multiple Edge nodes at once

- Orchestrate the concurrent execution of all algorithms and other applications running on those devices

- Know what is happening throughout the process, through telemetry graphs and log display screens

- Update the firmware and system software of Edge nodes to stay protected against cybersecurity vulnerabilities
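To give a feel for the first of these actions, deploying to many Edge nodes at once, here is a generic Python sketch. It does not use or describe Barbara's API: `deploy_everywhere`, `fake_deploy`, the node names and the image name are all hypothetical, and the deployment call is simulated so that the example stays self-contained.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def deploy_everywhere(nodes: List[str],
                      image: str,
                      deploy_one: Callable[[str, str], None],
                      max_workers: int = 8) -> Dict[str, str]:
    """Run deploy_one(node, image) for every node in parallel.

    Returns a per-node status so that one failing node does not hide
    the outcome on the others.
    """
    results: Dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {node: pool.submit(deploy_one, node, image) for node in nodes}
        for node, future in futures.items():
            try:
                future.result()
                results[node] = "ok"
            except Exception as exc:
                results[node] = f"failed: {exc}"
    return results


def fake_deploy(node: str, image: str) -> None:
    """Stand-in for a real deployment call; one node fails on purpose."""
    if node == "edge-03":
        raise RuntimeError("node unreachable")


if __name__ == "__main__":
    fleet = ["edge-01", "edge-02", "edge-03"]
    for node, status in deploy_everywhere(fleet, "example/app:1.2", fake_deploy).items():
        print(node, status)
```

Collecting a status per node, rather than stopping at the first error, matters at the edge, where some nodes are often temporarily unreachable.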

With the Barbara platform, companies can securely govern all distributed intelligence on Edge nodes, facilitating the deployment, debugging and updating of applications that teams of Data Scientists have developed.

Why use Barbara?

Keeping containerised applications running can be complex, because they often include many containers deployed on different machines. Barbara allows you to push those containers to the desired state and manage their lifecycles in a portable, scalable and extensible way.

In addition, Barbara gives you full control over development, operations and security, so you can deploy upgrades faster without compromising security or reliability and spend less time on infrastructure management, which translates into cost savings.

If you are working on deploying applications on the Edge in industrial environments and want us to show you how our platform can help you in the process, don't hesitate to ask us for a demo.